March is once again upon us. Daylight saving time has started, conference tournaments have wrapped up (shout out to Penn St for their tournament title appearance!), and it snowed this morning in Delaware. Ah, spring… here at last.

Building on my work from last year, I’ve set out again to create a model to predict March Madness, and I wanted to test a few hypotheses on how to improve it.

The model

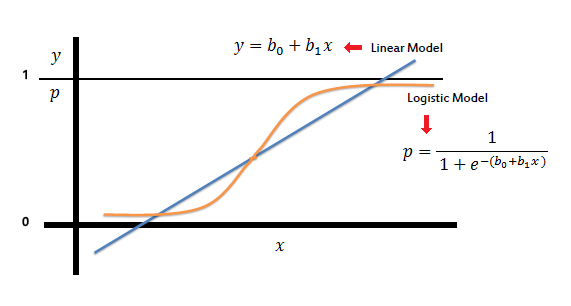

Previously I used a logistic regression to estimate the outcome: here, the probability of winning. This type of model is likely to be better than a linear model, since in a linear model very high or very low values of a given variable “x” can produce a negative probability of winning or a probability greater than 1. This is impossible! (See below)
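To make the point concrete, here is a minimal sketch comparing the two. The intercepts and slopes are made-up numbers for illustration, not coefficients from my model, and “x” stands in for a hypothetical difference in team quality.

```python
import math

def linear_probability(x, intercept=0.5, slope=0.03):
    """Linear probability model: a straight line with no bounds."""
    return intercept + slope * x

def logistic_probability(x, intercept=0.0, slope=0.12):
    """Logistic model: squashes any input into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-(intercept + slope * x)))

# x is a hypothetical quality gap between the two teams (made-up scale)
for x in (-30, -10, 0, 10, 30):
    print(f"x={x:+d}  linear={linear_probability(x):.2f}  "
          f"logistic={logistic_probability(x):.2f}")
```

At the extremes the straight line wanders below 0 and above 1, while the logistic curve flattens out and stays inside the valid range.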

This year, I wanted to investigate several other types of models and compare their accuracy against this baseline. I’ll try to give an overview of each type of model without getting into the weeds too much :)

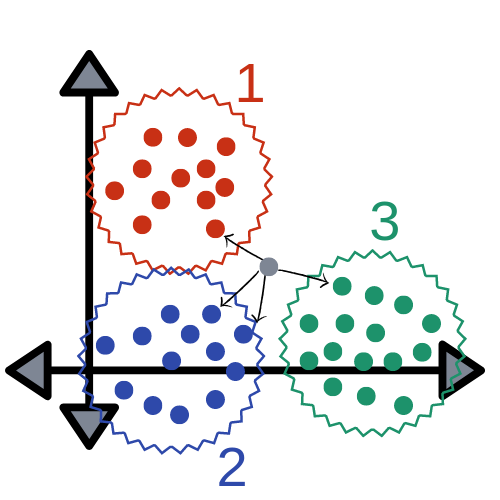

KNN

A K-Nearest Neighbors model finds the k data points in the training set that are most similar to a new data point, and uses their outcomes to estimate the most likely value of y for it.

The assumption here is that similar games have similar outcomes, so the data contains groupings. For this topic, a back-and-forth, high-tempo, high-scoring game between two great offenses might just favor the team with the slightly better defense, and a slow, methodical, low-scoring game might favor the slightly better offense. In a traditional regression, however, these would be conflicting effects on the outcome (who won: the team with the better offense or the team with the better defense?).

So this type of model might capture these context-dependent effects and improve accuracy.
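Here is a rough sketch of the idea in plain Python. The games are invented toy data, and the three features (tempo, offensive edge, defensive edge for team A) are hypothetical stand-ins, not the variables in my actual model.

```python
import math
from collections import Counter

def knn_predict(train_X, train_y, query, k=3):
    """Predict the majority label among the k training points closest to query."""
    distances = sorted(
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Toy games: (tempo, team A offensive edge, team A defensive edge).
# Label 1 means team A won. All numbers are made up.
train_X = [
    (74, 1.0, -2.0), (73, -1.5, 2.5), (75, 2.0, -1.0), (72, -1.0, 1.5),  # fast
    (61, 2.0, -1.5), (60, -2.0, 1.0), (62, 1.5, -2.0), (63, -1.0, 2.0),  # slow
]
train_y = [0, 1, 0, 1, 1, 0, 1, 0]

# Same matchup profile (worse offense, better defense) at two different tempos
fast_game = knn_predict(train_X, train_y, (74, -1.0, 2.0))
slow_game = knn_predict(train_X, train_y, (61, -1.0, 2.0))
print(fast_game, slow_game)
```

In this toy data the better defense wins the fast game and loses the slow one, which is exactly the kind of flip a single regression coefficient can't express. In practice you would also want to scale the features first so that tempo doesn't dominate the distance.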

PCA

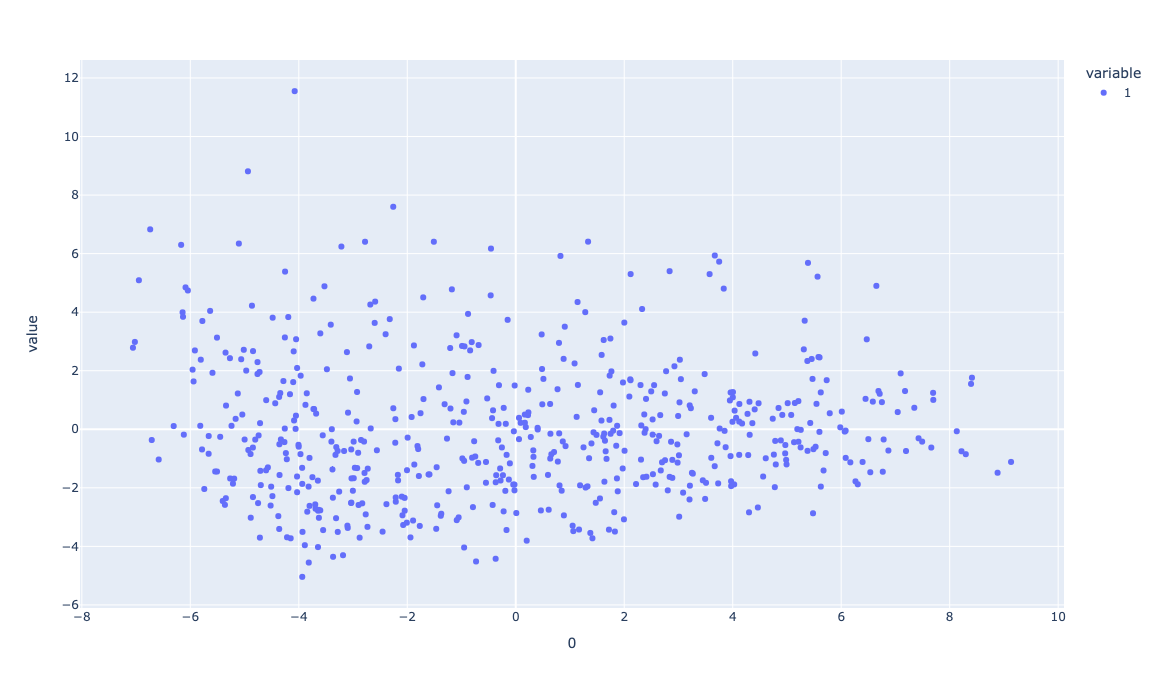

Principal Component Analysis attempts to capture most of the variance in the data in a smaller number (often 2) of dimensions.

This technique is useful for reducing the dimensionality of the data. When a model has lots of variables, it can be useful to plot the data in this reduced form to understand what the model is trying to fit. The PCA of my data looked like this:

Here the x-axis is the first component and the y-axis is the second. You can see there is a lot of variation here! When forecasting in general, a simpler model can help avoid overfitting and produce better predictions on average (which is what we are trying to do!). So maybe this type of dimensionality reduction, with little loss of information, might be useful in conjunction with a logistic regression and lead to better accuracy.
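For the curious, here is a small sketch of what “capturing most of the variance” means. It finds only the first component, using power iteration on the covariance matrix to keep things dependency-free (a library PCA would normally do this for you, and this is not how my plot was produced). The team numbers are made up.

```python
def center(rows):
    """Subtract each column's mean so the data is centered at zero."""
    n = len(rows)
    means = [sum(col) / n for col in zip(*rows)]
    return [[v - m for v, m in zip(row, means)] for row in rows]

def covariance(rows):
    """Sample covariance matrix of already-centered rows."""
    n, d = len(rows), len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) / (n - 1) for j in range(d)]
            for i in range(d)]

def first_component(cov, iterations=200):
    """Power iteration: returns the top eigenvector and its eigenvalue."""
    d = len(cov)
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    eigenvalue = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d))
                     for i in range(d))
    return v, eigenvalue

# Hypothetical teams: (offensive rating, defensive rating, margin)
teams = [(110, 95, 15), (105, 98, 7), (100, 100, 0),
         (95, 103, -8), (115, 92, 23), (102, 99, 3)]

cov = covariance(center(teams))
component, eigenvalue = first_component(cov)
total_variance = sum(cov[i][i] for i in range(len(cov)))
share = eigenvalue / total_variance
print(f"first component explains {share:.1%} of the variance")
```

Because these three made-up columns move together, one component carries nearly all of the variation, which is the situation where dropping dimensions costs you very little.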

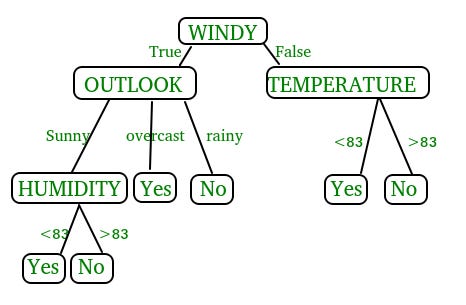

Decision tree

A decision tree is a type of machine learning algorithm that narrows down a prediction by repeatedly splitting the data set on its variables, then predicts based on the share of outcomes in each resulting group.

This type of more flexible model might be useful in a similar way to the KNN model, by picking up on distinct types of games (the big-time underdog but talented 15 seed Saint Peter’s against confident Kentucky last year could be a type of game based on the data!).
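A full tree is just this one step repeated, so here is a sketch of a single split (sometimes called a decision stump). The data is invented, the feature is a hypothetical rating gap, and for simplicity it picks the split by raw accuracy, whereas real tree implementations typically use a measure like Gini impurity or entropy.

```python
def best_split(values, labels):
    """Return (threshold, accuracy) for the rule 'predict 1 if value > threshold'."""
    best = (None, 0.0)
    ordered = sorted(set(values))
    # Candidate thresholds are the midpoints between neighboring values
    for lo, hi in zip(ordered, ordered[1:]):
        threshold = (lo + hi) / 2
        correct = sum((v > threshold) == bool(y) for v, y in zip(values, labels))
        accuracy = correct / len(values)
        if accuracy > best[1]:
            best = (threshold, accuracy)
    return best

# Hypothetical rating gap for team A, and whether team A won (made-up data)
rating_gap = [-12, -8, -5, -1, 2, 4, 9, 15]
team_a_won = [0, 0, 0, 1, 0, 1, 1, 1]

threshold, accuracy = best_split(rating_gap, team_a_won)
print(threshold, accuracy)
```

A tree would then take each side of that split and look for the next best question to ask, which is how it can end up carving out “types of games”.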

The data

Last year, I strictly used KenPom’s advanced basketball metrics data set. But it has a few problems that I went into in detail, such as the timing of the data and potential omitted variables. One thing I wanted to investigate this year was the potential effect of something KenPom’s data doesn’t include: injuries.

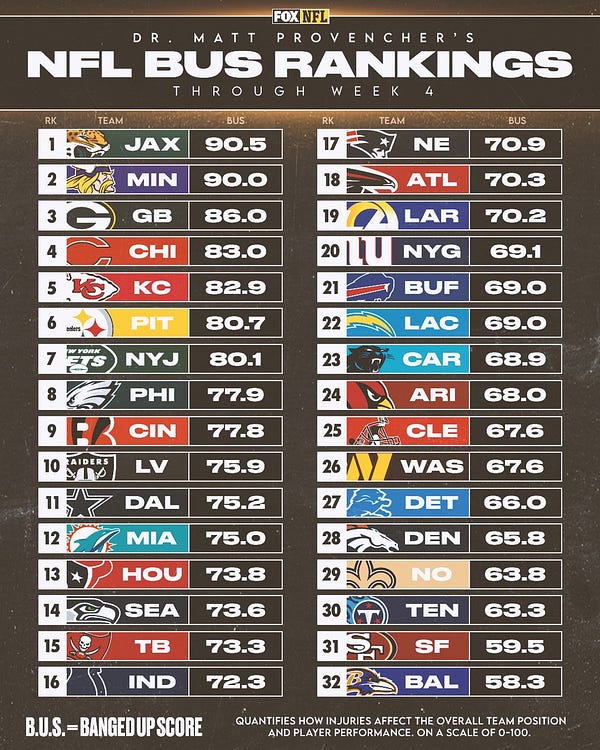

This was hard, since it’s difficult to come up with a measure of how injured a team is. For example, is 1 starter being out better or worse than 2 bench players being out? The NFL has something like this, called the BUS, or banged up score, which indexes injuries by team.

To my knowledge, NCAA men’s basketball doesn’t have an equivalent, or at least not one with historical data, so I needed another measure for injuries. In addition, there are other factors besides injuries that might impact a team’s performance. The one measure I could think of that should incorporate injuries, as well as all the other outside factors besides the X’s and O’s, like travel, fatigue, and experience, is the betting line for each game.

The money line for a game is the price of a wager. It effectively says that if you place a $1 bet and your bet wins, you will get $X back from the sportsbook. These lines can be read as probabilities: the lower the probability of a wager winning, the higher its payout.
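Here is a sketch of how a line turns into a probability. I’m assuming $X is the total returned on a winning $1 bet, stake included (usually called decimal odds); the function names and example prices are mine for illustration. Since US books usually quote lines like -200 or +150, there is a conversion for those too.

```python
def american_to_decimal(moneyline):
    """Convert a US-style line (e.g. -200 or +150) to total return per $1 staked."""
    if moneyline > 0:
        return 1 + moneyline / 100
    return 1 + 100 / abs(moneyline)

def implied_probability(decimal_odds):
    """Break-even win probability for a bet that returns decimal_odds per $1."""
    return 1.0 / decimal_odds

def remove_vig(prob_a, prob_b):
    """Rescale both sides so they sum to 1, stripping out the book's margin."""
    total = prob_a + prob_b
    return prob_a / total, prob_b / total

# Hypothetical game: favorite returns $1.25 per $1, underdog returns $4.00
favorite = implied_probability(1.25)
underdog = implied_probability(4.00)
fair_favorite, fair_underdog = remove_vig(favorite, underdog)
print(f"raw: {favorite:.3f} + {underdog:.3f} = {favorite + underdog:.3f}")
print(f"fair: {fair_favorite:.3f} / {fair_underdog:.3f}")
```

The raw probabilities add up to more than 1 because the book takes a cut, so rescaling them is one simple way to get something usable as a model input.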

However, using betting lines might be a bad proxy. There is likely a lot of measurement error in these lines due to the compounding nature of each of the effects, and although Vegas knows a lot, it doesn’t know everything (like if the star player broke up with their significant other the night before! This reminds me of an anecdote from when I was playing basketball: sometimes players would find out who was dating who on the opposing team so they could talk trash about their girlfriends during the game).

In addition to measurement error, there is a lot of variance in these lines, which could cause attenuation bias, where coefficients are biased toward zero and so come out artificially low. Also, since the line is primarily a function of how good the respective teams are, which is already captured by the KenPom data, it would be what Angrist and Pischke call a bad control: it conflates the effect of the KenPom data and biases the coefficients.

So we have two opposing forces here: omitted variable bias and bias due to the bad control. Which effect causes more error is an open question, and to answer it we look at the results.
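The attenuation part is easy to see in a quick simulation. This is a sketch on simulated data only (nothing here comes from the betting lines), with a true slope of 2 and a noise level I picked so the effect is obvious.

```python
import random

def ols_slope(xs, ys):
    """Slope of a one-variable least squares fit: cov(x, y) / var(x)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

random.seed(0)  # fixed seed so the run is repeatable
n = 5000

true_x = [random.gauss(0, 1) for _ in range(n)]
y = [2.0 * x + random.gauss(0, 0.5) for x in true_x]   # true slope is 2
noisy_x = [x + random.gauss(0, 1) for x in true_x]     # x measured with error

clean_slope = ols_slope(true_x, y)
noisy_slope = ols_slope(noisy_x, y)
print(f"slope with clean x: {clean_slope:.2f}")
print(f"slope with noisy x: {noisy_slope:.2f}")
```

With the measurement noise as large as the signal, the estimated slope shrinks to roughly half its true value, even though the underlying relationship hasn’t changed at all.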

The results

Unfortunately, the betting line data I could find only goes back to 2010, while the KenPom data dates back to 2002. So to make an apples-to-apples comparison, I cut the KenPom data from 2002-2019 down to 2010-2019. Comparing the accuracy of the two models’ predictions on the 2022 tournament, I found roughly a 5% increase in accuracy from using the betting line data. Note that this doesn’t bring the total accuracy into the 90s, since the data was cut down beforehand: going from the 2002-2019 data set to the 2010-2019 one, you lose quite a bit of accuracy. So although it seems that the omitted variable bias was the larger effect here, it’s still better to use the model with the larger data set.

So a change in data didn’t help our goal of beating last year. But what about the more advanced models like KNN, PCA, and the decision tree? A similar story there. Some of the models performed similarly to the logistic regression in terms of accuracy, but sometimes they dropped off quite a bit, to as low as 64% accuracy, which is about equivalent to getting all the 1 and 2 seeds’ games correct and randomly guessing on the rest!

In addition, I did some more testing and found that the model I trained last year, which tested in the high 80% range, did quite poorly on the 2021 tournament (about 65%). So although I would have loved to sit down and write this blog telling you I can predict at 90% consistently, I can’t. Last year’s predictions are likely best thought of as a best-case scenario. But creating more robust models that perform consistently in the low 80% range is feasible, so that is what I’m going to do this year. You can think of the trade-off here as sacrificing some accuracy for some robustness. And it is still way more accurate than I would be by myself!

Conclusion

A few big takeaways here: forecasting is hard, sometimes fancier models aren’t better, and the right data is hard to come by. This is part of the reason why KenPom’s data is so valuable: he carefully crafts his data to reflect the quality of the teams. Maybe over time the betting line data will become large enough to add to the model, but for right now I left it out. As for the rest of the particulars of the model, I’ll keep those proprietary for now. Maybe I’ll post an update with the link to the code or data set for a few TEZ :)

In the meantime, if you want to follow along with the bracket picks, you can do so below.

Note that this is purely model output with no adjustments, and it does not factor in injuries or travel. This should not be used as betting advice.
